Historian Yuval Noah Harari steps into the future — by speaking as a hologram at TED2018: The Age of Amazement, April 11, 2018, in Vancouver. Photo: Bret Hartman / TED

After the collapse of the Soviet Union, says TED Curator Chris Anderson as he opens Session 2, “we used to think that democratically-powered capitalism was supposed to take over the world.” Political scientist Francis Fukuyama famously called it the “end of history.” So now what happens? Five TED speakers come to the stage to talk about the possibilities and perils that lie ahead.

The temptations of fascism. In his 2017 book Homo Deus, historian Yuval Noah Harari predicted that humanity would become digital in the future — but he didn’t think it would happen this quickly, or to him. To deliver his talk, Harari appears as a digital avatar projection live from Tel Aviv, Israel. These days, he says, the term “fascism” is frequently tossed around. But Harari explains that we’ve forgotten what fascism actually is; many of us use it as a synonym for nationalism. The two are quite different: nationalism can make allies out of strangers, telling us that our nation is unique and we have a special obligation towards it and each other; fascism tells us that our nation is supreme and we don’t have to care about anyone or anything else. And while fascists formerly took power by controlling land and machines, today’s threat comes from governments or corporations controlling another commodity: data. “The greatest danger that now faces liberal democracy is that the revolution in information technology will make dictatorships more efficient than democracies,” Harari says. With the rise of AI, centralized data processing could give dictatorships a critical advantage over relatively decentralized democracies. So what can we do to prevent this possibility? If you’re an engineer, find ways to prevent too much data from being concentrated in too few hands, Harari suggests. And non-engineers should think about how to avoid being manipulated by those who control the data. “It’s the responsibility of all of us to get to know our weaknesses, and make sure they don’t become weapons in the hands of enemies of democracy,” he says. In a brief post-talk Q&A, Harari stresses the need to remain vigilant against tech companies that own our data and not assume that market incentives will prevent abuses. “In theory, you can rise against corporations but in practice it is extremely difficult,” he warns.

Being part of a democracy involves a lot of decisions — one reason we as citizens assign some of those decisions to our representatives. César Hidalgo has a bold — and in fact a pretty out-there — idea to make it work better. Photo: Bret Hartman / TED

Let’s automate democracy. There’s a fundamental weakness with representative democracy, says MIT physics professor César Hidalgo. It’s, well, that it’s representative, depending on the participation of people who can be manipulated, act inefficiently, or simply not show up at the polls. He, like other concerned citizens, wants to make sure we have elected governments that truly represent our values and wishes. His radical solution: what if scientists could create an AI that votes for you? Hidalgo envisions a “digital Jiminy Cricket that is able to answer questions on your behalf and make better decisions at larger scale.” Each voter could teach her own AI how to think like her, using quizzes, reading lists and other types of data. He is careful to say that this process would be aboveboard: in the kind of system he posits, data would not be used against you but as a way to best learn about your opinions. The idea is, once you’ve trained your AI and validated a few of the decisions it makes for you, you could leave it on autopilot, voting and advocating for you … or you could choose to approve every decision it suggests. It’s easy to poke holes in his idea, but Hidalgo believes it’s worth trying out on a small scale. His bottom line: “Democracy has a very bad user interface. If you can improve the user interface, you might be able to use it more.”

Poppy Crum studies how we express emotions — and suggests that the era of the poker face may be coming to an end, as new technologies allow us to know other people’s physiological responses. Photo: Bret Hartman / TED

Tech that can tell what you’re feeling. We like to think we have complete control over what other people see, know and understand about our internal world. But Poppy Crum, chief scientist at Dolby Laboratories, poses a provocative question: Do we actually possess that control, and even if we do, how long will it last? “Today’s technology is starting to make it really easy to see the signals and tells that give us away,” she says. She explains that we can learn a great deal about a person’s internal state by pairing sensors with machine learning. For example, infrared thermal imaging can reveal changes in our stress level, how hard our brain is working, and whether we’re fully engaged in what we’re doing. To make her point, she gives attendees a quick fright by showing a startling clip from horror film The Woman in Black. Using tubes embedded in the theater, she shows a real-time data visualization of CO2 in the room, pinpointing the moment the audience collectively jumped — and vividly displaying how a chemical analysis of our breath can betray our feelings. While this kind of technology may sound terrifying and Big Brother-ish, Crum believes it can help us, by making us more connected. “I’m not looking to create a world where our inner lives are ripped open and our personal data and privacy given away to people and entities where we don’t want it to go,” she explains, “but I am looking to create a world where we can care about each other more effectively, we can know more about when someone is feeling something that we ought to pay attention to, and we can have richer experiences of our technology.” In a short Q&A, Crum discusses the absence of rules governing this kind of technology. “I’m a believer that we have to step full force in and think about all the ways that this can be used to enable us to develop the right control over it,” she says.

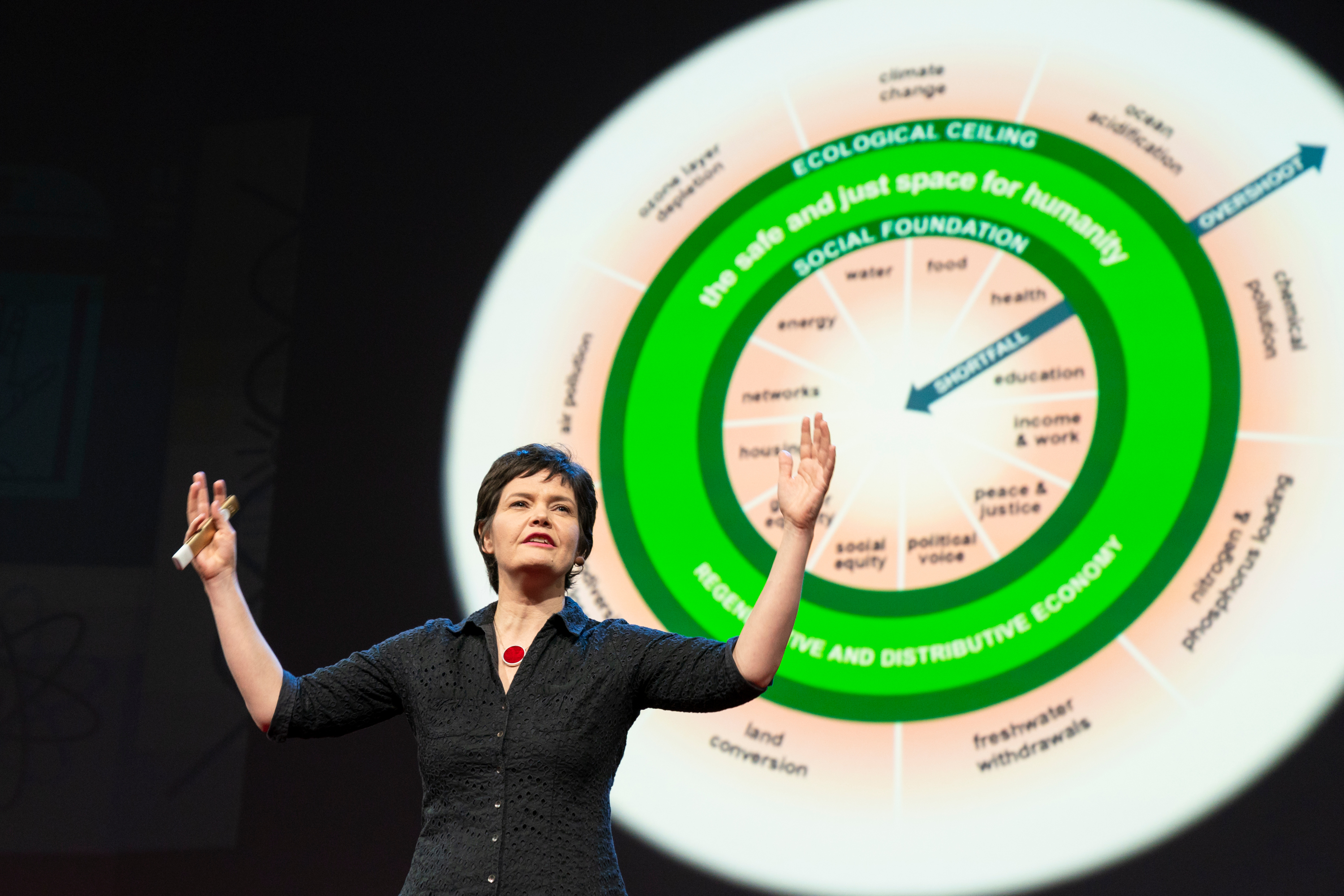

The economic theories of Kate Raworth can be summed up by this doughnut: a space of stability, prosperity and balance that does not demand unchecked growth. Photo: Bret Hartman / TED

Reimagining the shape of progress. “From your children’s feet to the Amazon forest, nothing in nature grows forever,” says Oxford economist Kate Raworth. “So why would we imagine our economies as the one system that can buck this trend?” Since the mid-20th century, a single number has held nations — from policymakers to individuals — in its thrall: gross domestic product, or GDP. Like children blowing a soap bubble, we pray that it keeps growing and growing. Our economies have become “financially, politically and socially addicted” to relentless GDP growth, despite the fact that it’s impossible to achieve and too many people and the planet are being pummeled in the process. What would a sustainable, universally beneficial economy look like? A doughnut, says Raworth (this diagram clarifies her analogy). She says we should strive to move countries out of the hole — “the place where people are falling short on life’s essentials” like food, water, healthcare and housing — and onto the doughnut itself. But we shouldn’t move too far lest we end up on the doughnut’s outside and bust through the planet’s ecological limits. Ultimately, our economies need to become “regenerative and distributive by design,” says Raworth. The former means working with and within the natural cycles of the earth; the latter, spreading the sources of wealth and production into the hands of many. “We have inherited economies that need to grow, whether or not they make us thrive,” she explains. “Today — in the world’s richest countries — we need economies that make us thrive, whether or not they grow.” (Read an excerpt from her book Doughnut Economics at TED Ideas.)

If we’re building ever more powerful AIs, says Max Tegmark, we should start thinking now about how to prevent the worst outcomes we can imagine. Because, as he suggests, AI is one of those technologies — like nuclear power and synthetic biology — where trial-and-error won’t really work. It’s best to get this one right the first time. Photo: Bret Hartman / TED

Getting ambitious about AI. “I want to avoid this silly carbon chauvinism idea that you can only be smart if you’re made of meat,” says MIT physicist Max Tegmark. As he sees it, humanity has two options as we move closer to a world where artificially intelligent machines can do everything better and cheaper than we can. Option #1: We could be complacent and not worry about the consequences as we build our technology. Or, Option #2: We could be ambitious and envision a truly inspiring future, then figure out how to steer towards it. The smarter choice is clear, but to do that, we need to overhaul our attitudes. We currently function with a reactive “learning from mistakes” mindset; instead, we must be proactive as we develop powerful technology like nuclear weapons — because our first mistake may be our last. Yes, it’s a powerful responsibility, but our destiny lies in our hands. “We’re all here to celebrate the age of amazement,” says Tegmark. “I think that the essence of the age of amazement should lie in becoming not overpowered, but empowered by our technology.”